Enterprise-grade sensors with AI smarts

Our AI-powered sensors deliver accurate people counting and movement tracking with enterprise-grade reliability and privacy. Deploy in minutes, scale globally.

Innovative

Advanced technology and user-friendly features.

The HoxtonAi sensors are designed to provide rock-solid data without compromising privacy.

S1 Overhead Sensor

For most people-counting and occupancy use cases, with a wide-angle variant for larger spaces.

Feedback Kiosk

Tablet-based feedback kiosk with built-in microphone. Visitors tap to start and speak. Use a pre-configured kiosk enclosure or bring your own iPad or Android tablet.

Off-the-shelf cameras

Use your own ONVIF-compatible cameras. Get in touch with us for the latest compatibility list.

01 — People Counting

The S1 Overhead Sensor

AI-powered overhead counting for entrances, zones, and occupancy — engineered and assembled in the UK.

Designed in London

Small sensor. Big intelligence.

The S1 overhead sensor is designed to be installed above an entrance and is the perfect choice for most deployments. Engineered and assembled by our team in the UK — every detail is designed to disappear into your space while delivering enterprise-grade data.

Impossibly compact

Just 22.9 × 7.1 × 4.1 cm and only 260 g. Mounts flush to any ceiling and practically vanishes once installed — your customers will never know it's there.

Sips power

Powered over a single PoE ethernet cable (802.3af, 37–57 V DC, Class 2) — no separate power supply, no electrician, no fuss.
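You can roughly estimate how many sensors one PoE switch can power from the 802.3af class. The sketch below assumes the switch allocates power by class. Under that assumption it reserves the 802.3af Class 2 figure of 7.0 W per port, and the sensor itself draws at most 6.49 W. Switches that allocate by measured draw may fit more devices. The switch budgets in the example are hypothetical, so check your switch's datasheet.

```python
import math

# IEEE 802.3af minimum power the switch port (PSE) supplies per device class, in watts.
PSE_POWER_W = {0: 15.4, 1: 4.0, 2: 7.0, 3: 15.4}


def max_powered_devices(switch_budget_w: float, poe_ports: int, pd_class: int = 2) -> int:
    """Devices of one class that fit both the switch's total PoE budget and its port count.

    Assumes class-based allocation: each port reserves the full class figure.
    """
    per_port = PSE_POWER_W[pd_class]
    by_budget = math.floor(switch_budget_w / per_port)
    return min(by_budget, poe_ports)


if __name__ == "__main__":
    # Hypothetical switches: the first is port-limited, the second budget-limited.
    print(max_powered_devices(65.0, 8))    # 8
    print(max_powered_devices(120.0, 24))  # 17
```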

Every connection covered

PoE ethernet, standard ethernet, or Wi-Fi (2.4 GHz & 5 GHz, 802.11b/g/n/ac): pick whatever your site already has, and change connection type later without changing hardware.

Privacy on-device

Images are anonymised directly on the sensor before anything leaves the device. No faces, no identifiers — ever.

15-minute install

Two screws, one cable, a guided setup wizard. From unboxing to live data in under fifteen minutes — no specialist tools required.

Designed & built in the UK

Engineered by our hardware team in London and assembled in-house. Every unit is tested, calibrated, and shipped directly from the UK.

Wide-angle variant

For larger entrances or low ceilings, the wide-angle S1 covers more area from a single mounting point.

98%+ accuracy

On-device anonymisation and cloud-based AI processing deliver enterprise-grade accuracy with complete privacy.

Why 98%+ accuracy

Counting based on journey prediction, not simple line crossings

Most people counters fire a +1 the instant something crosses a beam or line. That's fast — but it's also why they overcount. Hoxton works differently: it uses an overhead view to recognise a complete in-view journey and only counts when the journey matches a real entry or exit.

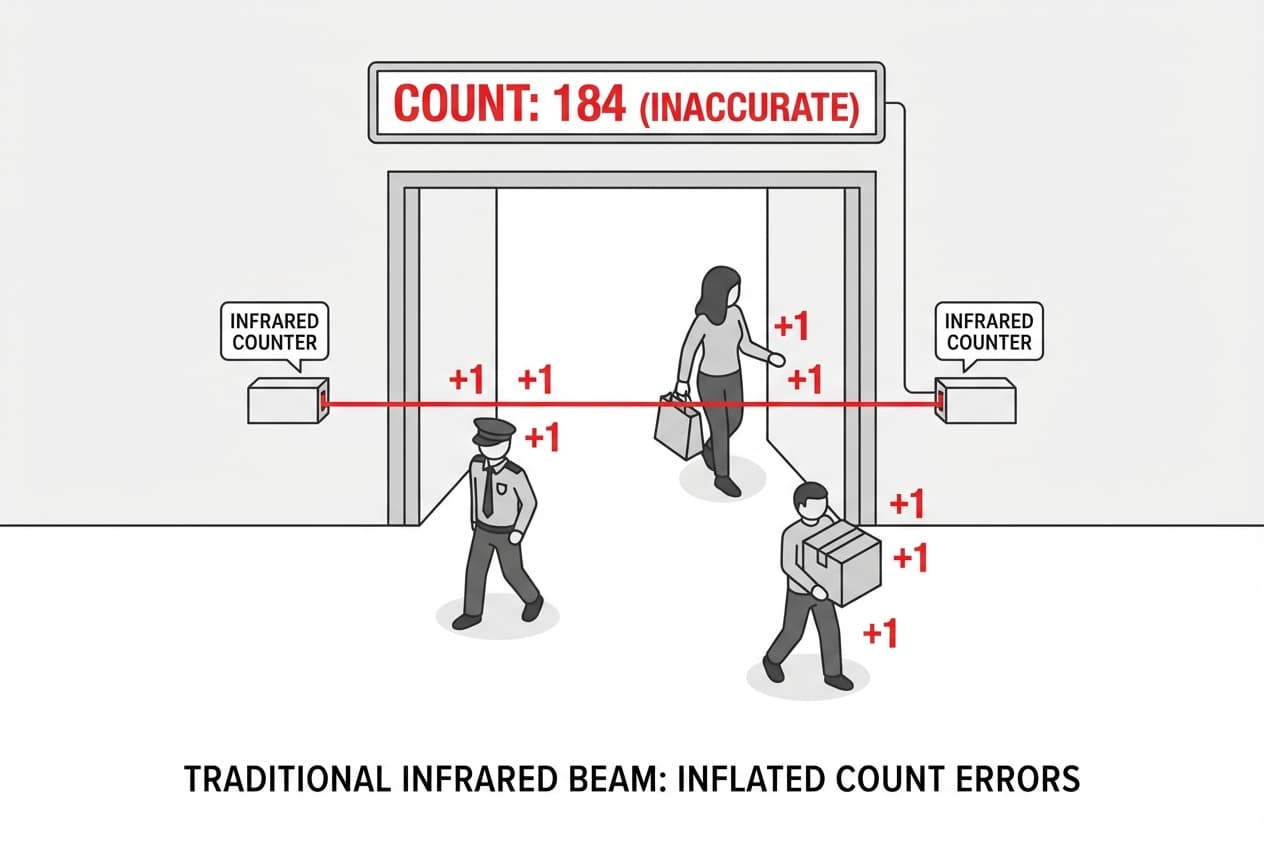

Traditional counters

A single trigger = a count, every time the line is crossed.

- Security guard pacing near the doorway? Counted repeatedly.

- Customer steps in, hesitates, turns back out? Often counted twice.

- Staff working near the entrance? Every pass inflates the number.

Result: counts drift upward whenever there's “doorway noise”.

Hoxton AI directional event counting

A count is only created when the system confirms a genuine entry/exit event.

- Understands a person's in-view journey from arrival to departure.

- Someone loops, pauses, or changes their mind? No false extra counts.

- A count only registers when they arrive on one side and leave on the other — a real entry or exit.

Result: accurate footfall that matches what actually happened, not how many times a line was crossed.
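Here is a minimal Python sketch of the two counting rules. It assumes each person's overhead track has already been reduced to a list of positions along the axis through the doorway. In that model, negative values are outside the virtual line and values of zero or more are inside. The `Counts`, `line_crossing_count` and `journey_count` names and the 1-D track model are hypothetical simplifications for illustration, not HoxtonAi's actual pipeline.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical 1-D model of an overhead track: one position per frame along the
# axis through the doorway. y < 0 is outside the virtual line, y >= 0 is inside.
Track = List[float]


@dataclass
class Counts:
    entries: int = 0
    exits: int = 0


def side(y: float) -> int:
    """-1 for outside the line, +1 for inside."""
    return -1 if y < 0 else 1


def line_crossing_count(tracks: List[Track]) -> Counts:
    """Traditional rule: every crossing of the line fires a count."""
    counts = Counts()
    for track in tracks:
        for prev, curr in zip(track, track[1:]):
            if side(prev) < side(curr):
                counts.entries += 1
            elif side(prev) > side(curr):
                counts.exits += 1
    return counts


def journey_count(tracks: List[Track]) -> Counts:
    """Journey rule: at most one count per track, based on where it starts and ends."""
    counts = Counts()
    for track in tracks:
        if not track:
            continue
        start, end = side(track[0]), side(track[-1])
        if start < end:
            counts.entries += 1
        elif start > end:
            counts.exits += 1
        # start == end: the person looped, hesitated or turned back, so no count.
    return counts


if __name__ == "__main__":
    tracks = [
        [-1.0, 1.0, -1.0, 1.0, -1.0],             # guard pacing across the doorway
        [-2.0, -1.0, 0.5, 1.0, 0.5, -1.0, -2.0],  # customer steps in, turns back
        [-2.0, -1.0, 1.0, 2.0],                   # genuine entry
    ]
    print(line_crossing_count(tracks))  # Counts(entries=4, exits=3)
    print(journey_count(tracks))        # Counts(entries=1, exits=0)
```

On the same three tracks, the line-crossing rule reports seven events where only one real entry happened. The journey rule waits until a track ends before deciding, which is why it can fire at most once per person.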

How directional event counting and journey prediction work

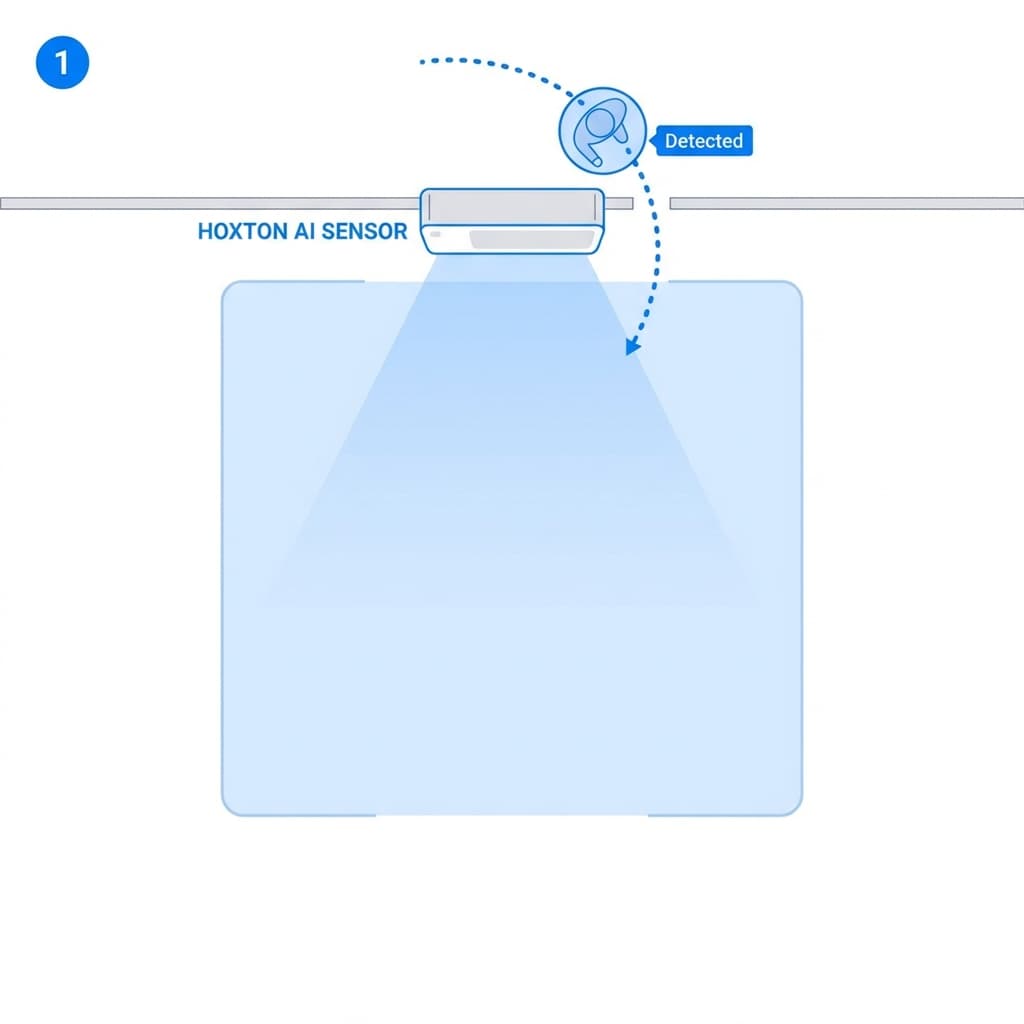

Detect

Person enters the field of view

A person is detected as they arrive in the overhead zone.

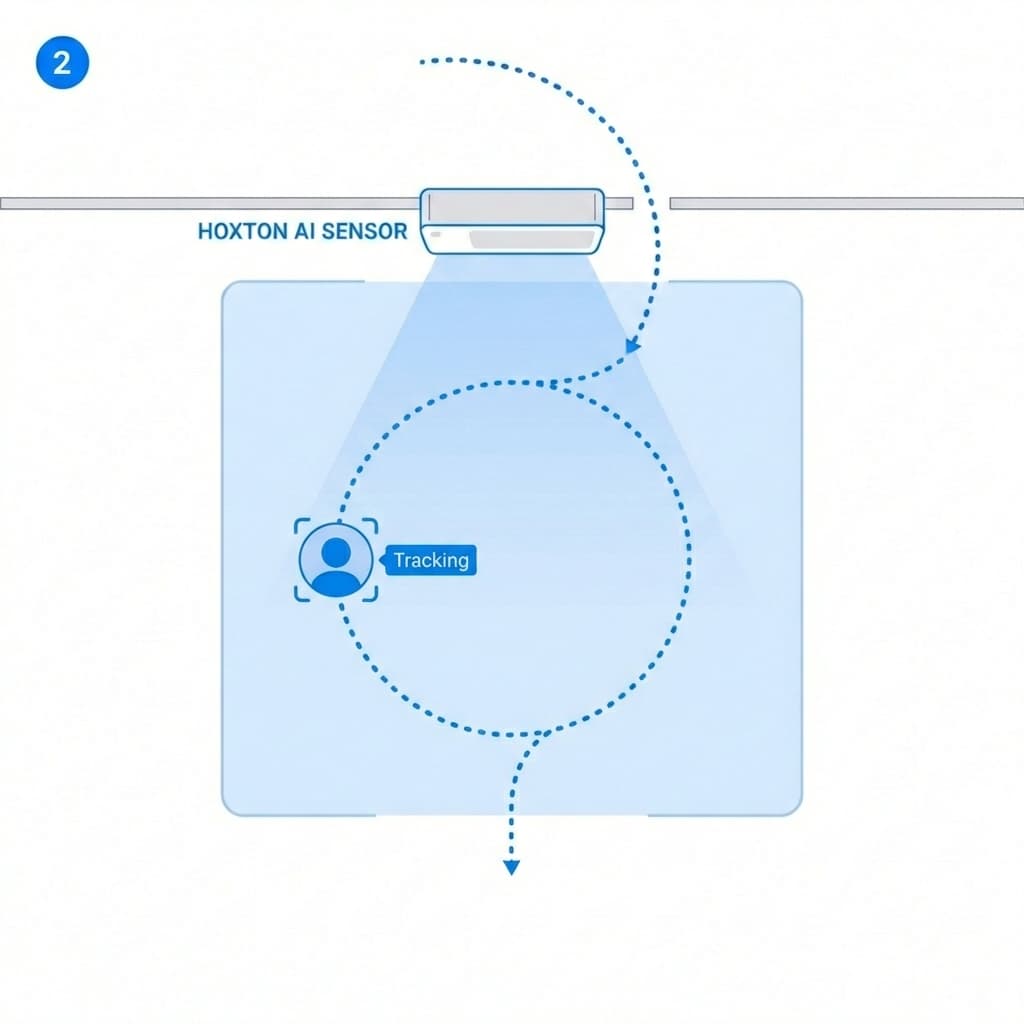

Interpret

Movement is understood in context

The system evaluates whether the movement indicates entering, exiting, or lingering/turning back (including loops and hesitation).

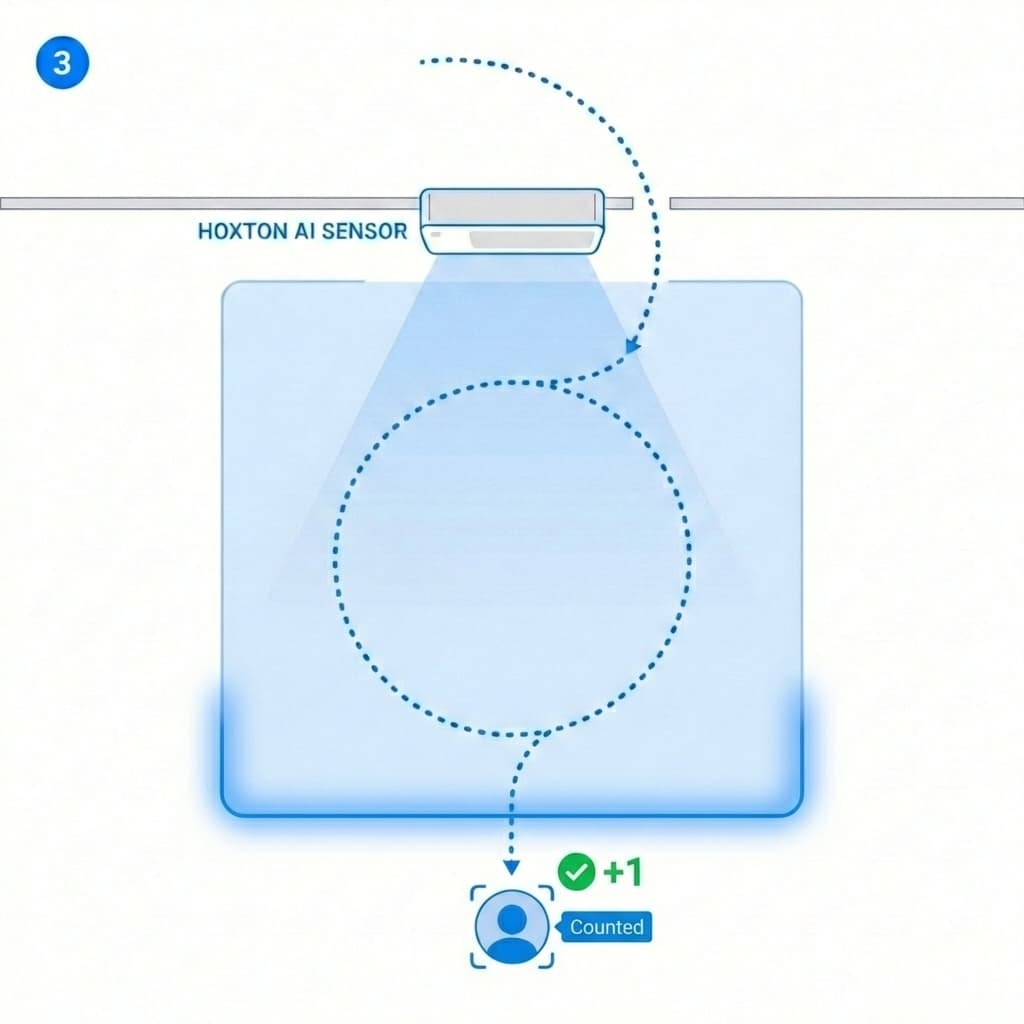

Confirm

Count only when the journey is complete

A count is recorded only when the person leaves the zone on the opposite side from where they arrived, confirming a true entry or exit event exactly once.
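The Detect, Interpret and Confirm steps can be sketched as a streaming, per-person state machine. This is a hypothetical Python illustration, not HoxtonAi's implementation. It assumes a tracker already does three things:

- assigns a stable `track_id` to each person
- reports their position `y` along the entry axis every frame (negative is outside)
- signals when they leave the field of view

`JourneyCounter`, `observe`, `interpret` and `leave` are invented names.

```python
from dataclasses import dataclass
from typing import Dict, Optional


def side(y: float) -> int:
    """-1 = outside the doorway line, +1 = inside (hypothetical 1-D overhead model)."""
    return -1 if y < 0 else 1


@dataclass
class Journey:
    arrival_side: int     # fixed when the person is first detected
    last_y: float         # most recent position along the entry axis
    last_dy: float = 0.0  # most recent non-zero movement
    reversals: int = 0    # direction changes seen so far (loops, hesitation)


class JourneyCounter:
    """Sketch of a streaming Detect -> Interpret -> Confirm counter."""

    def __init__(self) -> None:
        self.active: Dict[int, Journey] = {}
        self.entries = 0
        self.exits = 0

    def observe(self, track_id: int, y: float) -> None:
        """Feed one overhead position for a tracked person."""
        journey = self.active.get(track_id)
        if journey is None:
            # Detect: the person has just arrived in the overhead zone.
            self.active[track_id] = Journey(arrival_side=side(y), last_y=y)
            return
        # Interpret: follow the movement and note any reversal of direction.
        dy = y - journey.last_y
        if dy * journey.last_dy < 0:
            journey.reversals += 1
        if dy != 0:
            journey.last_dy = dy
        journey.last_y = y

    def interpret(self, track_id: int) -> str:
        """Provisional reading of the movement so far; nothing is counted here."""
        journey = self.active[track_id]
        current = side(journey.last_y)
        if current > journey.arrival_side:
            return "entering"
        if current < journey.arrival_side:
            return "exiting"
        return "turning back" if journey.reversals else "lingering"

    def leave(self, track_id: int) -> Optional[str]:
        """Confirm: called once when the person leaves the field of view."""
        journey = self.active.pop(track_id, None)
        if journey is None:
            return None
        exit_side = side(journey.last_y)
        if exit_side > journey.arrival_side:
            self.entries += 1
            return "entry"
        if exit_side < journey.arrival_side:
            self.exits += 1
            return "exit"
        return None  # left on the side they arrived from: no count


if __name__ == "__main__":
    counter = JourneyCounter()
    for y in [-2.0, -1.0, 0.5, 1.0]:  # person 1 steps in...
        counter.observe(1, y)
    print(counter.interpret(1))       # entering
    for y in [0.5, -1.0, -2.0]:       # ...then changes their mind and walks out
        counter.observe(1, y)
    print(counter.interpret(1))       # turning back
    print(counter.leave(1))           # None
```

Keeping `interpret` separate from `leave` mirrors the distinction above. The movement can be read as "entering" mid-journey, but only the final exit side decides whether a count is recorded.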

02 — Visitor Feedback

Feedback Kiosk Hardware Options

Choose the setup that fits your venue — a pre-configured kiosk enclosure or bring your own tablet.

Feedback

Kiosk Enclosure Option

A pre-configured tablet in a floor-standing or counter-top enclosure. Visitors tap to start and speak their feedback. The built-in microphone captures their response, and the screen then displays a thank-you message. Multiple enclosure configurations are available.

Customisable Design

Choose from floor-standing, counter-top, or wall-mounted enclosure options.

On-Screen Prompts

Get feedback on what's most important to you by asking specific questions on the screen.

Bring your own device

Run SaySo on any tablet

The kiosk enclosure is optional. SaySo runs as a standalone app on any Android tablet, or on any iPad from 2021 onwards, so no proprietary hardware is required. Download it, log in, and start collecting voice feedback immediately.

Android tablets: any device with a microphone (10-inch screen recommended)

iPads from 2021 onwards

Pair with a kiosk enclosure for a polished setup

Setup in minutes, managed from anywhere

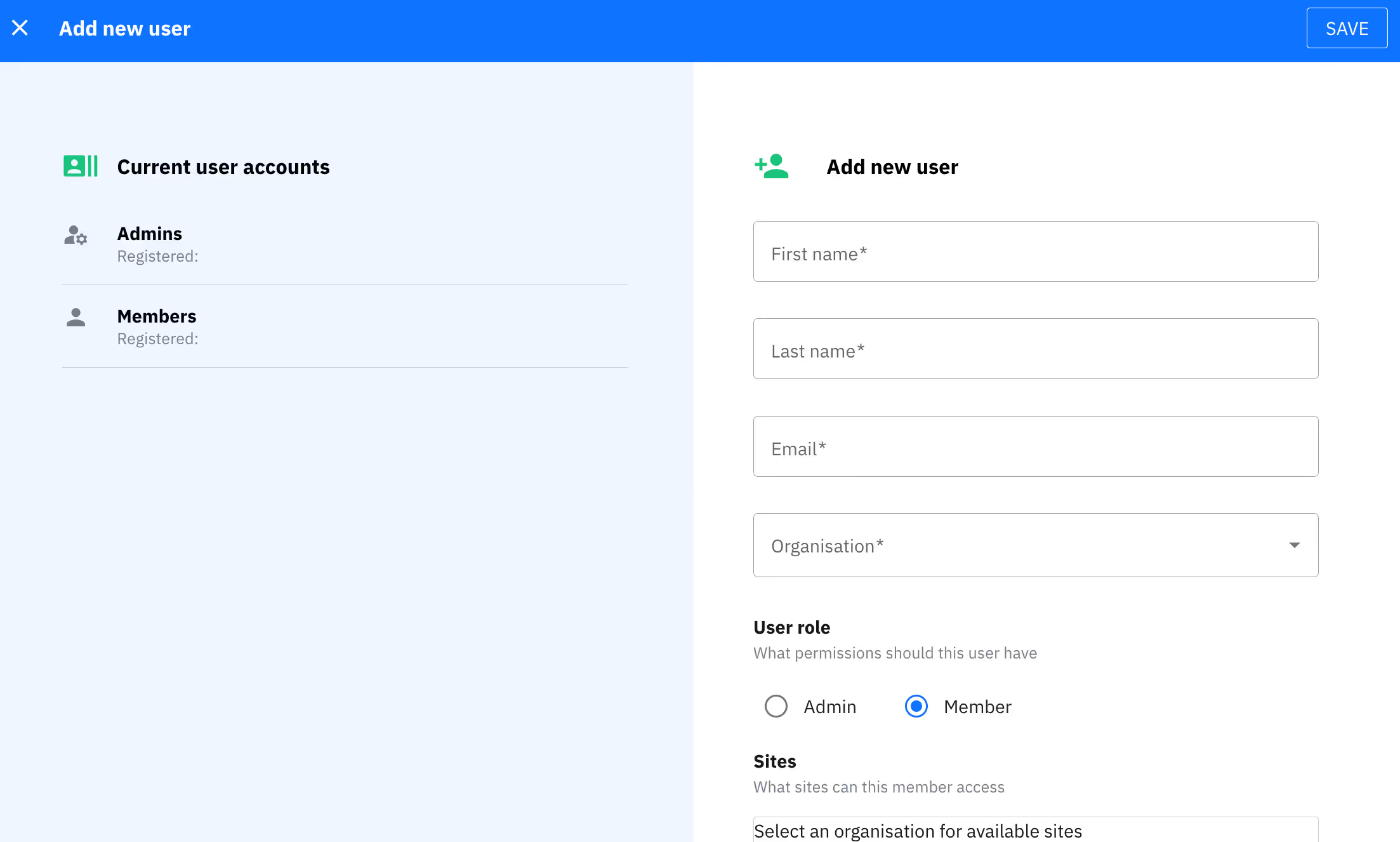

Every sensor comes with access to Control Room, our cloud platform for setup, monitoring, and team management. PoE, Wi-Fi, or ethernet: choose what suits your site.

Comparing sensor technologies? Read our complete buyer's guide.

FAQs

Discover answers to common questions about our HoxtonAi sensors and their functionalities.